Streamline Clinical Data Management with this 6-Step Process

by Vibeke Brinck | Jan 20, 2021 | Data Management

Clinical data management (CDM), just like its name suggests, is the process of collecting, managing, curating, and analyzing the information in clinical trials.

Every academic investigator and data manager knows just how important that “process of handling information” is to the study. If data management becomes a roadblock in your otherwise groundbreaking research, you are less likely to be able to replicate your results and publish your findings.

Ultimately, the purpose of every clinical study is to get good data. Good data in traumatic brain injury (TBI) studies changes (and often saves) lives.

So, what’s the most effective and foolproof way to secure high-quality, reliable, and statistically sound data?

By using a centralized clinical data management system for your clinical trials.

In this article, we’ll break down and detail the stages for clinical data management, and the CDM process that has made QuesGen Systems the most trusted research partner for brain health and TBI studies.

But first, a public service announcement on the importance of data security. When researching CDM systems to use for your clinical trial, make sure they are:

The 6 Key Phases of Clinical Data Management

1. Planning and Design

Going into your study, chances are you already have a research protocol that details things like:

- How you’re screening your patients

- What data collection timepoints you have in your study

- The assessments that are necessary at those timepoints

It is important that the technical team works closely with the research team to functionally identify what assessments and patient interactions are necessary at what time and in what order. When you’ve mapped out the flow and timeline for your data collection, the stage is set for preparing the database, forms, and overall plan so it’s easy to put the data in and take the data out. The database and research platform design needs to support and enhance the way that users collect and use data.
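To make the mapping concrete, here is a minimal sketch of how a study's timepoints and assessments might be written down before any forms are built. The timepoint names, day offsets, and assessment names are hypothetical placeholders, not taken from any real protocol or from a specific CDM platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Timepoint:
    name: str           # label for the visit or contact
    day_offset: int     # days after enrollment
    assessments: tuple  # forms due at this timepoint


# Hypothetical schedule; every name and offset here is a placeholder.
SCHEDULE = (
    Timepoint("screening", 0, ("eligibility", "consent")),
    Timepoint("two_week", 14, ("symptom_checklist",)),
    Timepoint("three_month", 90, ("symptom_checklist", "outcome_interview")),
)


def assessments_due(day: int) -> list[str]:
    """List every assessment whose timepoint has been reached by `day`, in schedule order."""
    due = []
    for tp in sorted(SCHEDULE, key=lambda t: t.day_offset):
        if tp.day_offset <= day:
            due.extend(f"{tp.name}:{a}" for a in tp.assessments)
    return due
```

Writing the schedule as data rather than burying it in form logic gives the technical and research teams one shared artifact to review.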

2. Database Integration

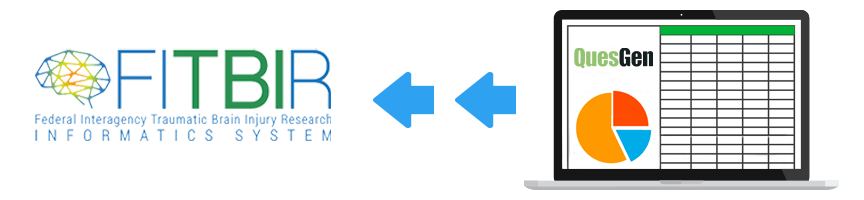

With the flow and timepoints of your study defined and the research platform complete, the CDM system can be customized to achieve the desired outcomes. But there’s an additional consideration for TBI studies, and it’s something you really don’t want to overlook during the design stage: the Federal Interagency Traumatic Brain Injury Research (FITBIR) Informatics System.

Because the National Institutes of Health (NIH) requires that all TBI research study data be submitted to FITBIR (warning: it’s a cumbersome and often painstaking process), using a CDM system that allows for easy data extraction and seamless integration with the FITBIR data repository can save a lot of time and headaches.

3. Checking Data Fields for Integrity

Make sure the data you want is being entered correctly, in the correct fields. On-screen edit checks and validations prevent the introduction of ‘bad data’ into the study. For example, a date field shouldn’t accept free text as input. Dates, free text, integers, decimals, and so on are all “types” of data that need to be specified at this stage.
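As a rough illustration of typed fields, the sketch below gives each field a parser and rejects input that doesn't fit. The field names and the `parse_record` helper are assumptions made for this example; a real CDM system would define its types through its own form builder.

```python
from datetime import date

# Hypothetical field definitions: field name -> parser that raises on bad input.
FIELD_PARSERS = {
    "injury_date": date.fromisoformat,  # expects YYYY-MM-DD
    "age_years": int,
    "weight_kg": float,
    "notes": str,                       # free text is accepted as-is
}


def parse_record(raw: dict) -> tuple[dict, dict]:
    """Split raw form input into parsed values and per-field error messages."""
    values, errors = {}, {}
    for field, parser in FIELD_PARSERS.items():
        text = raw.get(field, "")
        try:
            values[field] = parser(text)
        except (ValueError, TypeError):
            errors[field] = f"{text!r} is not a valid value for {field}"
    return values, errors
```

Here, typing "last Tuesday" into `injury_date` would land in `errors` instead of being stored.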

For example, you can set up rules to prevent data entry errors, like:

- The date of a lab procedure can’t be before the date of the patient's injury

- Stop times can't be before start times

- “Alerts/flags” on things that seem unusual (a person entered as 5’2” 700 lbs, for instance)

Depending on the flow and function of your study, a good CDM tool will allow you to set all necessary rules in advance, so that issues with your data are caught at the point of entry.
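The three rules listed above could be sketched roughly as follows. This is a minimal illustration, not how any particular CDM platform implements its checks: the field names, the use of BMI as a plausibility test, and the 10 to 70 window are all assumptions made for the example.

```python
from datetime import date, time


def check_record(rec: dict) -> tuple[list[str], list[str]]:
    """Return (errors, warnings): errors should block saving, warnings only flag for review."""
    errors, warnings = [], []
    if rec["lab_date"] < rec["injury_date"]:
        errors.append("lab date is before injury date")
    if rec["stop_time"] < rec["start_time"]:
        errors.append("stop time is before start time")
    # BMI = weight (kg) / height (m) squared; the 10-70 window is illustrative only.
    bmi = rec["weight_kg"] / rec["height_m"] ** 2
    if not 10 <= bmi <= 70:
        warnings.append(f"implausible height/weight combination (BMI {bmi:.0f})")
    return errors, warnings
```

A person entered as 5’2” and 700 lbs (about 1.57 m and 318 kg) falls far outside that window, so the record would be flagged for review rather than rejected outright.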

4. Tracking Data Completion and Compliance

Every study has an enrollment goal.

- How many patients are you planning to enroll in a given timeframe?

- What type of patients should you be enrolling?

- Maybe your study requires one-third emergency room discharges, one-third hospital admissions, and one-third ICU patients.

One of the biggest benefits of using a data management tool is the ability to track and monitor progress toward your goal. To ensure sound and reliable data for your final objective, you need visibility into each site’s progress (are they recruiting the right patients, are they collecting and entering the right data, etc.). That visibility is a critical part of the CDM process.
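A minimal sketch of that kind of tracking is shown below, assuming a hypothetical target of 300 patients split evenly across three groups. The group names, site names, and target numbers are placeholders for illustration.

```python
from collections import Counter

# Hypothetical enrollment targets per patient group.
TARGETS = {"ed_discharge": 100, "hospital_admit": 100, "icu": 100}


def remaining_by_group(enrollments: list[tuple[str, str]]) -> dict[str, int]:
    """How many more patients each group needs; enrollments are (site, group) pairs."""
    counts = Counter(group for _site, group in enrollments)
    return {g: max(target - counts[g], 0) for g, target in TARGETS.items()}


def counts_by_site(enrollments: list[tuple[str, str]]) -> dict[str, Counter]:
    """Per-site tally of enrolled patients in each group."""
    per_site: dict[str, Counter] = {}
    for site, group in enrollments:
        per_site.setdefault(site, Counter())[group] += 1
    return per_site
```

The first function shows how far the study is from its overall mix, and the second shows which sites are contributing to which group.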

5. Data Curation

By this step in the CDM process, the database has been implemented, the study is underway, and data is being collected. Hopefully the data you have at this point is clean-“ish”, but there should always be some careful curation of the data.

Centralized data curation allows the study team to analyze data as the study progresses. Standard and custom data queries let the team examine large sets of data and look for patterns. Any issues these queries uncover go back to the sites, which correct the questionable data. The curation team then closes the loop in a timely manner by determining whether each site’s resolution was reasonable. This step often involves some back and forth, but it leads to cleaner and more accurate results.
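The query-and-resolve loop described here can be pictured with a small sketch. The `Query` record, its status values, and the missing-field check are all hypothetical; they stand in for whatever queries and workflow a given curation team actually uses.

```python
from dataclasses import dataclass


@dataclass
class Query:
    site: str
    record_id: str
    issue: str
    status: str = "open"  # open -> answered -> closed


def find_missing(records: list[dict], field: str) -> list[Query]:
    """Raise one query per record where `field` is missing or empty."""
    return [
        Query(r["site"], r["id"], f"{field} is missing")
        for r in records
        if r.get(field) in (None, "")
    ]


def site_responds(query: Query) -> Query:
    """The site reports that it has corrected (or explained) the data."""
    query.status = "answered"
    return query


def curator_reviews(query: Query, resolution_ok: bool) -> Query:
    """The curation team closes the query, or reopens it if the fix was not reasonable."""
    query.status = "closed" if resolution_ok else "open"
    return query
```

A reopened query simply goes around the loop again, which is the back and forth mentioned above.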

While we list this step toward the end as a final check, clinical data management best practice is to analyze and curate the data throughout the study: identifying gaps and issues, and creating feedback loops between the rules that were set early on and the curation and analysis. Data curation can also be a powerful training tool. As researchers uncover patterns in the data that point to improper entry or collection issues, directed training and outreach can be developed to address them.

6. Lock the Data

In the end, the goal is to get the data sets (forms, patient records, entire study) fully curated and as clean as possible. At that point the entire database should be locked against further changes to preserve its integrity.
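One simple way to picture a lock is a dataset that refuses edits once locked and keeps a checksum so later tampering can be detected. The `Dataset` class below is a hypothetical sketch of that idea, not a description of how any particular CDM platform locks its databases.

```python
import hashlib
import json


class LockedError(Exception):
    """Raised when someone tries to change a locked dataset."""


class Dataset:
    def __init__(self, records: dict):
        self._records = dict(records)
        self.locked_digest = None  # set once the dataset is locked

    def digest(self) -> str:
        """SHA-256 checksum of the records in a stable (sorted-key) JSON form."""
        payload = json.dumps(self._records, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def update(self, record_id: str, values: dict) -> None:
        if self.locked_digest is not None:
            raise LockedError("dataset is locked; no further edits allowed")
        self._records[record_id] = values

    def lock(self) -> str:
        """Freeze the dataset and remember its checksum."""
        self.locked_digest = self.digest()
        return self.locked_digest

    def is_intact(self) -> bool:
        """True if the dataset is locked and still matches the checksum taken at lock time."""
        return self.locked_digest is not None and self.digest() == self.locked_digest
```

Storing the checksum alongside the published analysis gives anyone revisiting the study a way to confirm they are working from the same locked data.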

Using a CDM Platform to Yield High Quality, Repeatable Results

When you use a proven CDM system to streamline and ensure the quality of your clinical data management, every critical step listed above is built into the process and taken care of for you.

Designed for users (programming skills not necessary) to rapidly build a database predicated on superior data management, the QuesGen platform assures reliable collection, curation, and availability of study data.